Whisper is open source. GPT-2 was, too.

Absolutely this. Phones are the primary device for Gen Z. Phone use doesn’t develop tech skills because there’s barely anything you can do with the phones. This is particularly true with iOS, but still applies to Android.

Even as an IT administrator, there’s hardly anything I can do when troubleshooting phone problems. Oh, push notifications aren’t going through? Well, there are no useful logs or anything for me to look at, so…cool. It makes me crazy how little visibility I have into anything on iPhones or iPads. And nobody manages “Android” in general; at best they manage like two specific models of one specific brand (usually Samsung or Google). It’s impossible to manage arbitrary Android phones because there’s so little standardization and so little control over the software in the general case.

Is this legit? This is the first time I’ve heard of human neurons used for such a purpose. Kind of surprised that’s legal. Instinctively, I feel like a “human brain organoid” is close enough to a human that you cannot wave away the potential for consciousness so easily. At what point does something like this deserve human rights?

I notice that the paper is published in Frontiers, the same journal that let the notorious AI-generated giant-rat-testicles image get published. They are not highly regarded in general.

DuckDuckGo is an easy first step. It’s free, publicly available, and familiar to anyone who is used to Google. Results are sourced largely from Bing, so there is second-hand rot, but IMHO there was a tipping point in 2023 where DDG’s results became generally more useful than Google’s or Bing’s. (That’s my personal experience; YMMV.) And they’re not putting half-assed AI implementations front and center (though they have some experimental features you can play with if you want).

If you want something AI-driven, Perplexity.ai is pretty good. Bing Chat is worth looking at, but last I checked it was still too hallucinatory to use for general search, and the UI is awful.

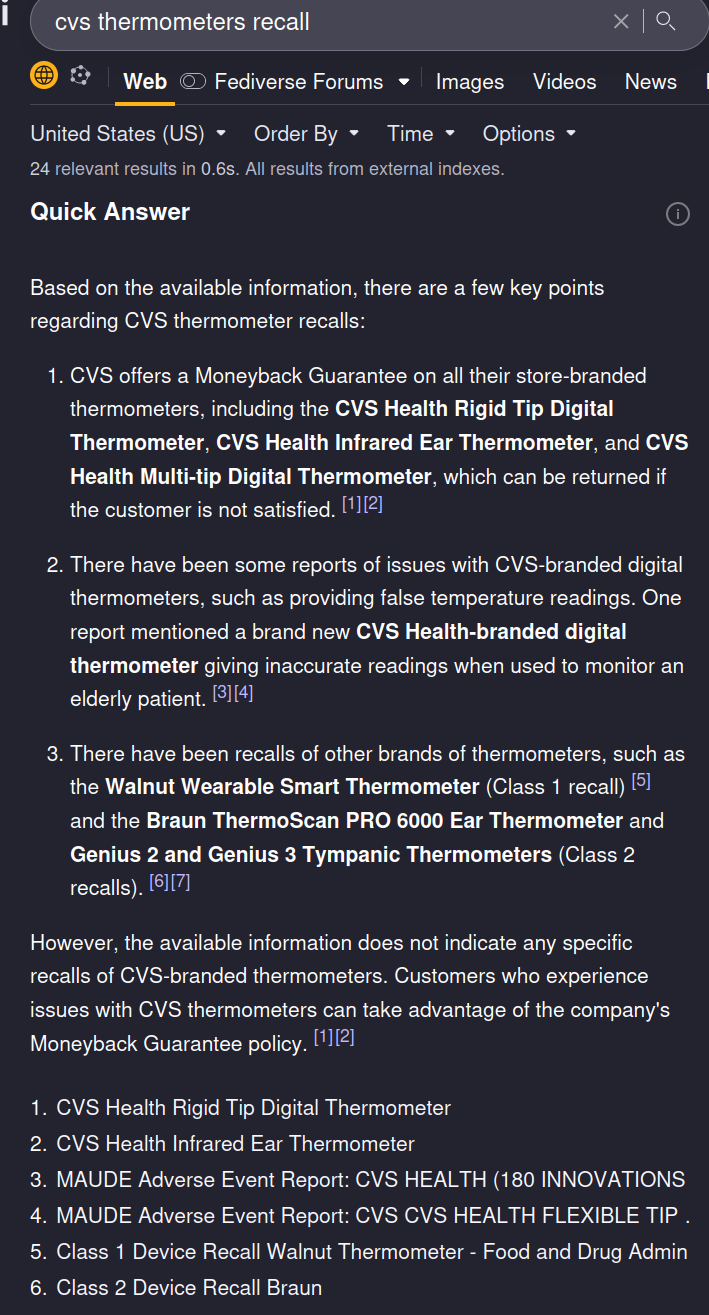

I’ve been using Kagi for a while now and I find its quick summaries (which are not displayed by default for web searches) much, much better than this. For example, here’s what Kagi’s “quick answer” feature gives me with this search term:

Room for improvement, sure, but it’s not hallucinating anything, and it cites its sources. That’s the bare minimum anyone should tolerate, and yet most of the stuff out there falls wayyyyy short.

“Smart” may as well be synonymous with “unpredictable”. I don’t need my computer to be smart. I need it to be predictable, consistent, and undemanding.

Thanks! I didn’t see that. Relevant bit for convenience:

we call model providers on your behalf so your personal information (for example, IP address) is not exposed to them. In addition, we have agreements in place with all model providers that further limit how they can use data from these anonymous requests that includes not using Prompts and Outputs to develop or improve their models as well as deleting all information received within 30 days.

Pretty standard stuff for such services in my experience.

I’m not entirely clear on which (anti-)features are only in the browser vs in the web site as well. It sounds like they are steering people toward their commercial partners like Binance across the board.

Personally I find the cryptocurrency stuff off-putting in general. Not trying to push my opinion on you though. If you don’t object to any of that stuff, then as far as I know Brave is fine for you.

Short answer: inserting affiliate links into results, and weird cryptocurrency stuff. https://www.theverge.com/2020/6/8/21283769/brave-browser-affiliate-links-crypto-privacy-ceo-apology

I don’t know if that’s “worse than Microsoft” because that’s a real high bar. But it’s different anyway.

If you click the Chat button on a DDG search page, it says:

DuckDuckGo AI Chat is a private AI-powered chat service that currently supports OpenAI’s GPT-3.5 and Anthropic’s Claude chat models.

So at minimum they are sharing data with one additional third party, either OpenAI or Anthropic depending on which model you choose.

OpenAI and Anthropic have similar terms and conditions for enterprise customers. They are not completely transparent and any given enterprise could have their own custom license terms, but my understanding is that they generally will not store queries or use them for training purposes. You’d better seek clarification from DDG. I was not able to find information on this in DDG’s privacy policy.

Obviously, this is not legal advice, and I do not speak for any of these companies. This is just my understanding based on the last time I looked over the OpenAI and Anthropic privacy policies, which was a few months ago.

I’ve been using Kagi for a while, so I’ll post a few quick thoughts I had after reading the article, linked blog, and mastodon thread.

The one thing in the blog post I strongly disagree with is her statement that the summarizer is “the same old AI bullshit”. I think they just assumed that without actually testing it. The summarizer is fantastic, and is a great example of the right way to use LLMs. Its output comes entirely from the URL or file you specify. It does not hallucinate. You can ask it follow-up questions about the document, and again, its replies are limited in scope to what’s actually in that document. If you ask it something out of scope it’ll tell you that it can’t find that information in the document. This is great because it’s using the LLM for what LLMs are actually good for — complex language parsing — and not for what they’re bad for, like reasoning or information storage/retrieval. It’s actually quite difficult to misuse the summarizer. It’s straightforward and effective. This is Kagi’s killer feature, IMO.

I can’t speak as highly of its search-integrated AI features like FastGPT. They mostly take information from the contents of the first few search results, but they also seem to “fill in the blanks” more than I’d like. Sometimes it gives me information that is simply not in the sources that it cites. It’s not as bad as using ChatGPT (which hallucinates all day every day, and if it ever tries to cite sources, it hallucinates those, too) but it needs improvement.

That said, Kagi doesn’t shove the AI down your throat like you might think reading the blog post. These are separate features that need to be explicitly activated. Plain searches don’t return results from the LLMs. If you want that, you need to explicitly go to the assistant or trigger the “quick answer” feature on the search results page. EDIT: I just realized that this is not totally true as of a few weeks ago, when they added a feature to automatically trigger the “quick answer” blurb for search queries ending in a question mark. Perhaps that’s why Lori felt like it was over-emphasized.

Anyway, back to the summarizer, here is an example of it in action. I gave it the URL of the Mastodon post. I think this is an excellent result. I also asked it an unrelated followup question so you can see that it limits itself to the information in the URL. It will not make shit up!

The summarizer lets me download conversations in markdown form, so I’ll just paste it right in here so you can see.

Exploring https://hackers.town/@lori/112255132348604770

Assistant:

Key points:

Query:

What’s the capital of North Dakota?

Assistant:

The knowledge provided does not contain any information about the capital of North Dakota. The context is focused on an email exchange between the author and the CEO of Kagi search engine regarding criticism of the company.

Correct. This is also why Apple switched to zsh as the default shell over bash. They still ship Bash 3.2 in macOS, because from 4.0 on, Bash started using GPLv3 instead of GPLv2.

I’m not against the idea of creating proprietary software out of open-source software, if the license allows that. However, I am always against this practice of “closing the door behind you”.

Apple: builds their entire software ecosystem on free, open-source foundations.

Also Apple: better have a million euros if you want to even start distributing software.

The best use case for an external app store is free open-source software, like we have on the Android side with F-Droid. Apple stopped that before it even started. Jeez.

Yeah, I wouldn’t be too confident in Facebook’s implementation, and I certainly don’t believe that their interests are aligned with their users’.

That said, it seems like we’re reaching a turning point for big tech, where having access to private user data becomes more of a liability than an asset. Having access to the data means that they will be required by law to provide that data to governments in various circumstances. They might have other legal obligations in how they handle, store, and process that data. All of this comes with costs in terms of person-hours and infrastructure. Google specifically cited this as a reason they are moving Android location history on-device; they don’t want to deal with law enforcement constantly asking them to spy on people. It’s not because they give a shit about user privacy; it’s because they’re tired of providing law enforcement with free labor.

I suspect it also helps them comply with some of the recent privacy protection laws in the EU, though I’m not 100% sure on that. Again, this is a liability issue for them, not a user-privacy issue.

Also, how much valuable information were they getting from private messages in the first place? Considering how much people willingly put out in the open, and how much can be inferred simply by the metadata they still have access to (e.g. the social graph), it seems likely that the actual message data was largely redundant or superfluous. Facebook is certainly in position to measure this objectively.

The social graph is powerful, and if you really care about privacy, you need to worry about it. If you’re a journalist, whistleblower, or political dissident, you absolutely do not want Facebook (and by extension governments) to know who you talk to or when. It doesn’t matter if they don’t know what you’re saying; the association alone is enough to blow your cover.

The metadata problem is common to a lot of platforms. Even Signal cannot use E2EE for metadata; they need to know who you’re communicating with in order to deliver your messages to them. Signal doesn’t retain that metadata, but ultimately you need to take their word on that.
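
To make the social-graph point concrete, here’s a rough sketch in Python. Everything in it is made up for illustration: the account names, the record format, and the helper functions are my own assumptions, not how Facebook or Signal actually store anything. The point is only that sender/recipient/timestamp records, with no message bodies at all, are enough to work out who talks to whom and how often.

```python
from collections import defaultdict

# Hypothetical metadata records: (sender, recipient, timestamp).
# There are no message bodies here, as would be the case with E2EE.
records = [
    ("alice", "reporter", "2024-03-01T22:14"),
    ("alice", "reporter", "2024-03-02T23:02"),
    ("bob", "carol", "2024-03-02T09:30"),
    ("alice", "bob", "2024-03-03T12:00"),
]


def build_social_graph(records):
    """Count contacts between each unordered pair of accounts."""
    graph = defaultdict(int)
    for sender, recipient, _timestamp in records:
        pair = tuple(sorted((sender, recipient)))
        graph[pair] += 1
    return dict(graph)


def contacts_of(graph, account):
    """Return everyone the account exchanged messages with, and how often."""
    result = {}
    for (a, b), count in graph.items():
        if account == a:
            result[b] = count
        elif account == b:
            result[a] = count
    return result


graph = build_social_graph(records)
print(contacts_of(graph, "alice"))
```

Nothing in there ever looks at what anyone said. The link between “alice” and “reporter” falls out of the metadata alone, which is exactly the problem for a whistleblower.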

Any Safari extensions installed that might be interfering with this behavior? That’s the best I can figure.

Bell Riots are coming this year. The Second American Civil War starts in 2026, which leads directly into WWIII.

From there, everything is pretty much terrible until warp drive is invented.

Oh, gotcha. I misunderstood and thought you were describing a Chrome-vs-Firefox difference specifically. Yeah, I can relate. I’m de-googling my life but I’m not sure I’ll ever be 100% de-googled. I’m taking it bit by bit. I sign up for new things with different email addresses now and occasionally I’ll change existing services if it’s possible. But there’s no way I’m going to go through my bajillion web site accounts and move them all.

I don’t understand the problem. Google services work in Firefox pretty much the same way, yeah? Does Chrome integrate an authenticator app? If so, you might want to change your 2FA settings at https://myaccount.google.com/security. If you have an Android phone you can get push notifications on it, or you can also use third-party authenticator apps.

It would be great if the frontend and backend were separated with a unified API and you could simply choose a frontend/interface (Vivaldi) with whatever backend/engine (Gecko). That’s not how it (currently) works though.

Arc has floated this idea. Currently Arc is Chromium-based, but they say they’ve designed it to allow for swapping engines in the future.

IIRC, Edge had a similar feature for a while, allowing you to run legacy Internet Explorer tabs if a site required it. Not sure if that still exists.

Firefox syncs across devices as well, if you sign up for a Firefox account and enable sync. This works for bookmarks, logins, history, and you can even access remote tabs if you want. It’s also easy to send a single page from one device to another.

On desktop, Firefox has an import feature that will pull your bookmarks and logins from other browsers (like Chrome) into your Firefox profile.

Even if you’re neck-deep in Google services, Chrome doesn’t do anything special.

Also worth mentioning: you might still need to add the “most recent visit” column under the View menu. And if you dare to actually load any of those pages, they’ll move all the way to the top, and will not remain in their original location. It’s really annoying.